About Nvidia DIGITS

GPUs are highly efficient at training models, especially deep neural networks. Still, training a model is not an easy task: we need to prepare datasets, choose a network, and configure many parameters. This is a big challenge if you don’t have much experience with programming and with tools such as Python and PyTorch. Nvidia DIGITS is a web platform that lets us train models through a user-friendly GUI, without writing code. DIGITS simplifies common deep learning tasks such as managing data, designing and training neural networks on multi-GPU systems, monitoring performance in real time with advanced visualizations, and selecting the best performing model from the results browser for deployment. DIGITS is completely interactive so that data scientists can focus on designing and training networks rather than programming and debugging.

In this article, I’ll use the MNIST dataset and the LeNet network to train a model that classifies handwritten digits. The training process can be divided into three steps:

- Import Dataset

- Training

- Testing and analysing

Let’s get started. If you are unsure how to install DIGITS, please refer to the documentation.

Import Dataset

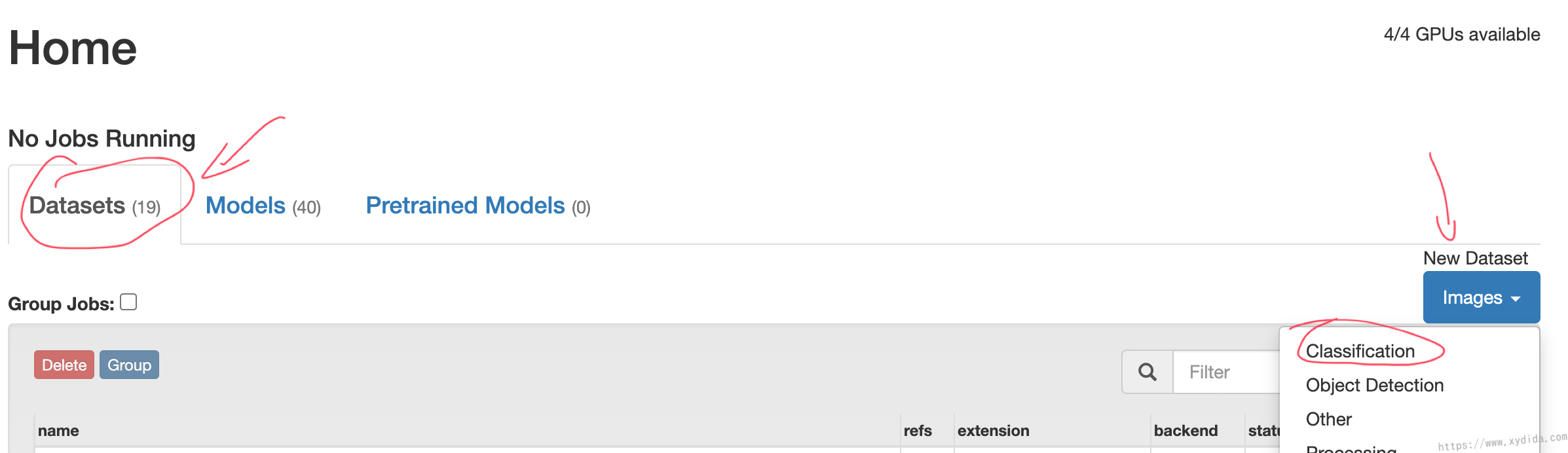

On the DIGITS home page, choose Datasets and create a new classification dataset:

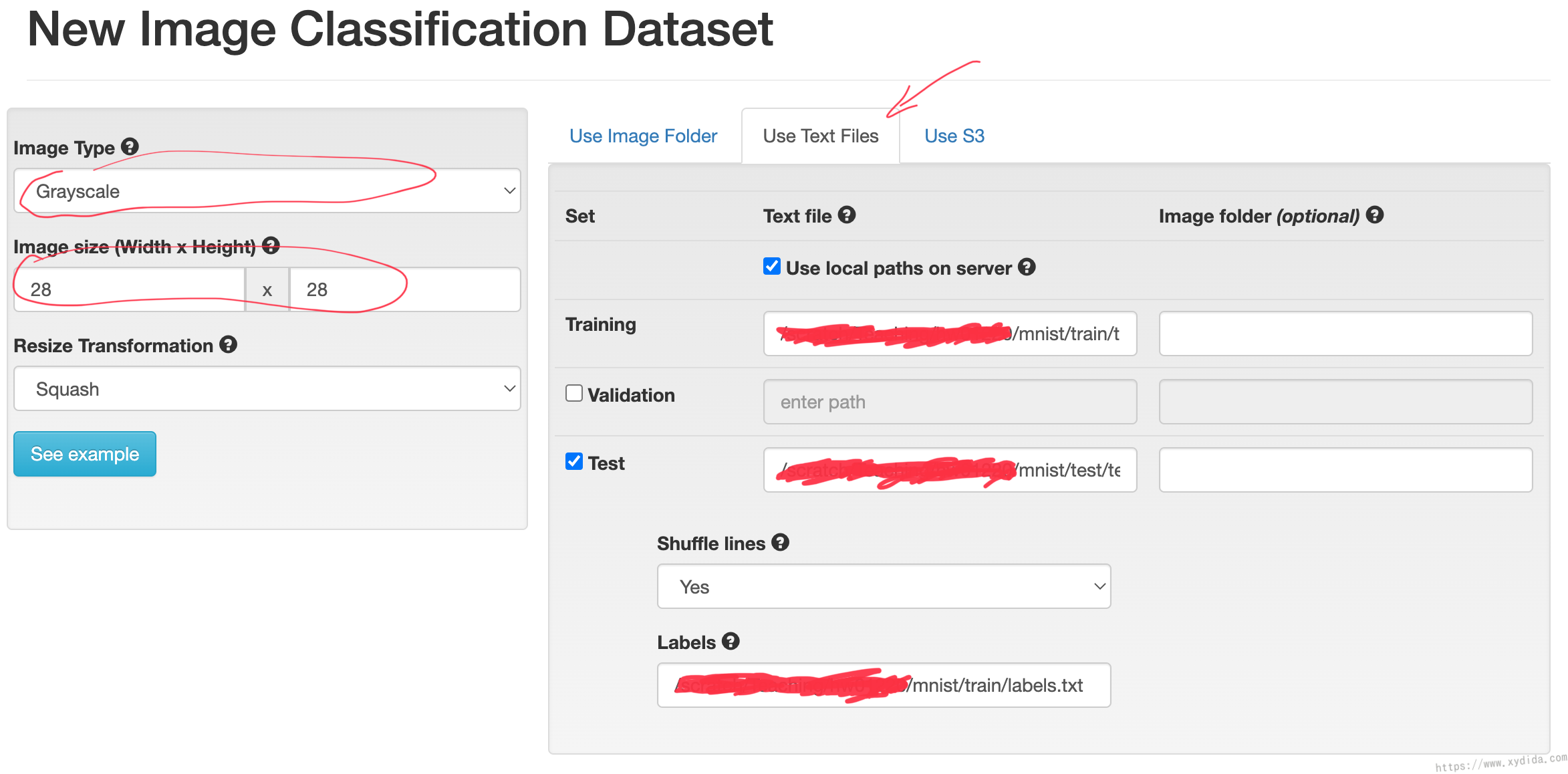

Then we need to configure our classification dataset, which is MNIST.

All of the images in the MNIST dataset are grayscale and 28x28 pixels in size. We use “User Text Files” to tell DIGITS where our dataset files are.

The Training, Test and Label fields take absolute paths on our server, e.g.

/a/b/mnist/train/train.txt
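For readers who need to build such a text file themselves, here is a minimal sketch. It assumes the list format is one image per line, written as the absolute image path followed by a space and the zero-based class index, and it assumes the images are stored in one sub-folder per class. The function names `build_file_list` and `write_lines` are hypothetical helpers for this illustration, not part of DIGITS.

```python
from pathlib import Path


def build_file_list(image_root, class_names, extensions=(".png", ".jpg", ".jpeg")):
    """Return lines of the form '<absolute image path> <class index>'.

    Assumes one sub-folder per class under image_root (an assumption
    about how the dataset is laid out on disk).
    """
    root = Path(image_root).resolve()
    lines = []
    for index, name in enumerate(class_names):
        class_dir = root / name
        if not class_dir.is_dir():
            continue  # skip classes that have no folder
        for image in sorted(class_dir.iterdir()):
            if image.suffix.lower() in extensions:
                lines.append(f"{image} {index}")
    return lines


def write_lines(path, lines):
    """Write one entry per line, ending the file with a newline."""
    Path(path).write_text("\n".join(lines) + "\n")
```

With the example path above, a call such as `write_lines("/a/b/mnist/train/train.txt", build_file_list("/a/b/mnist/train", [str(d) for d in range(10)]))` would produce the training list. Because the path and the label are separated by a space, paths containing spaces would need extra care.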

We don’t need a validation set for this training run, so uncheck validation.

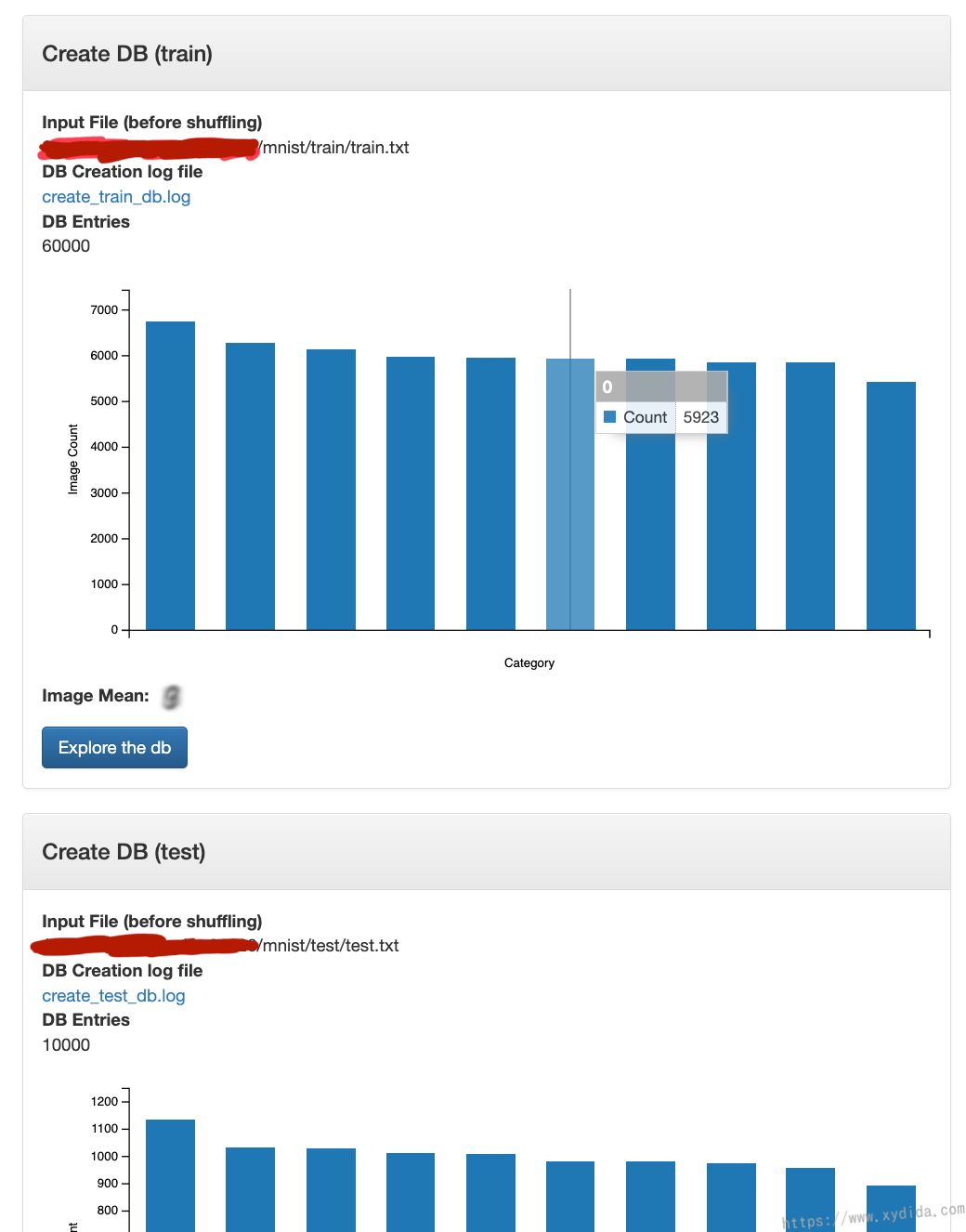

Enter a name for your dataset and create it. It usually takes several minutes to create the dataset from the 70k images in MNIST. If it succeeds, we can see the result:

Training

Now let’s train a CNN called “LeNet” to recognize the digits 0-9. This simple and popular CNN architecture is available as a preset in DIGITS, so it is easy to try.

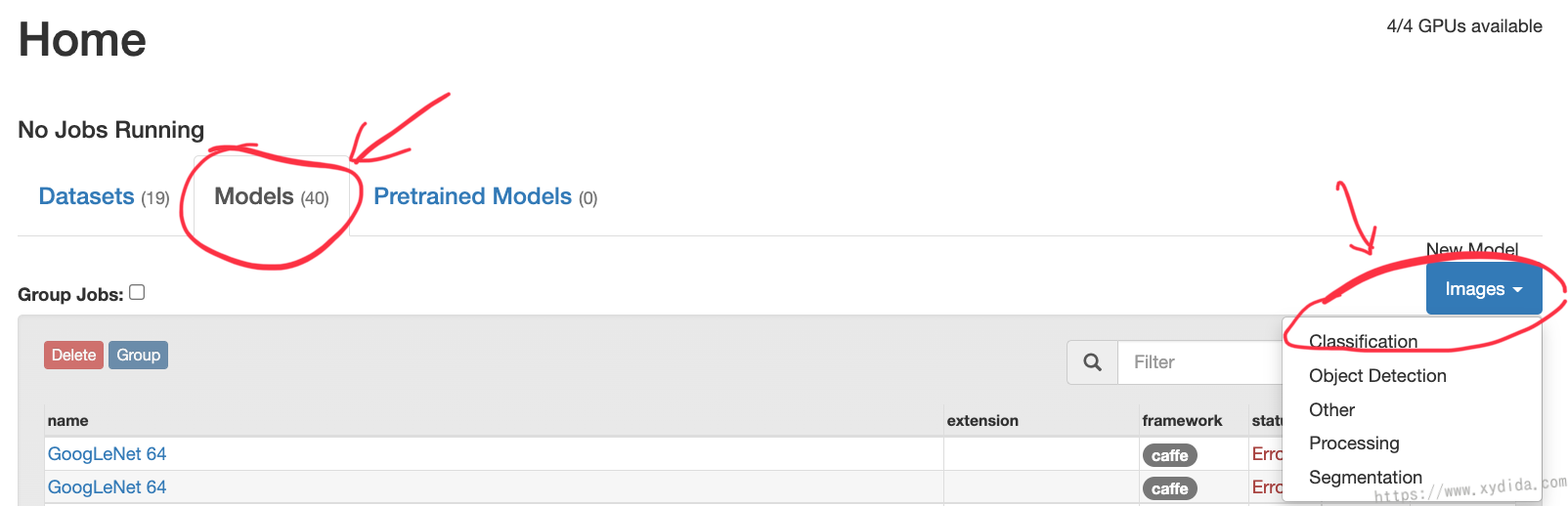

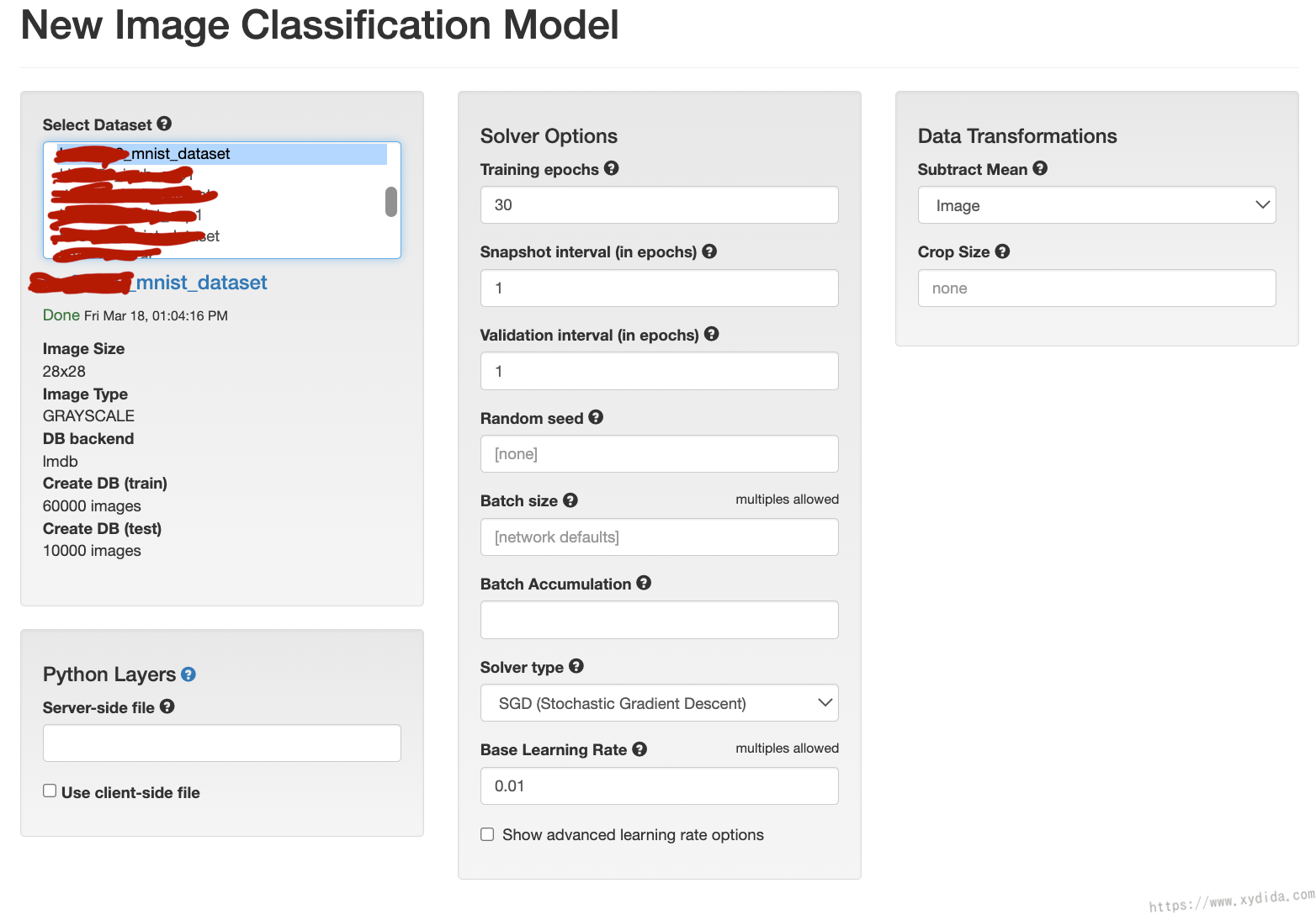

On the home page, choose “Models” and select “Classification”.

Here, choose the dataset we have created. The solver options contain many settings, such as the number of epochs and the learning rate, but for now leave them at their defaults.

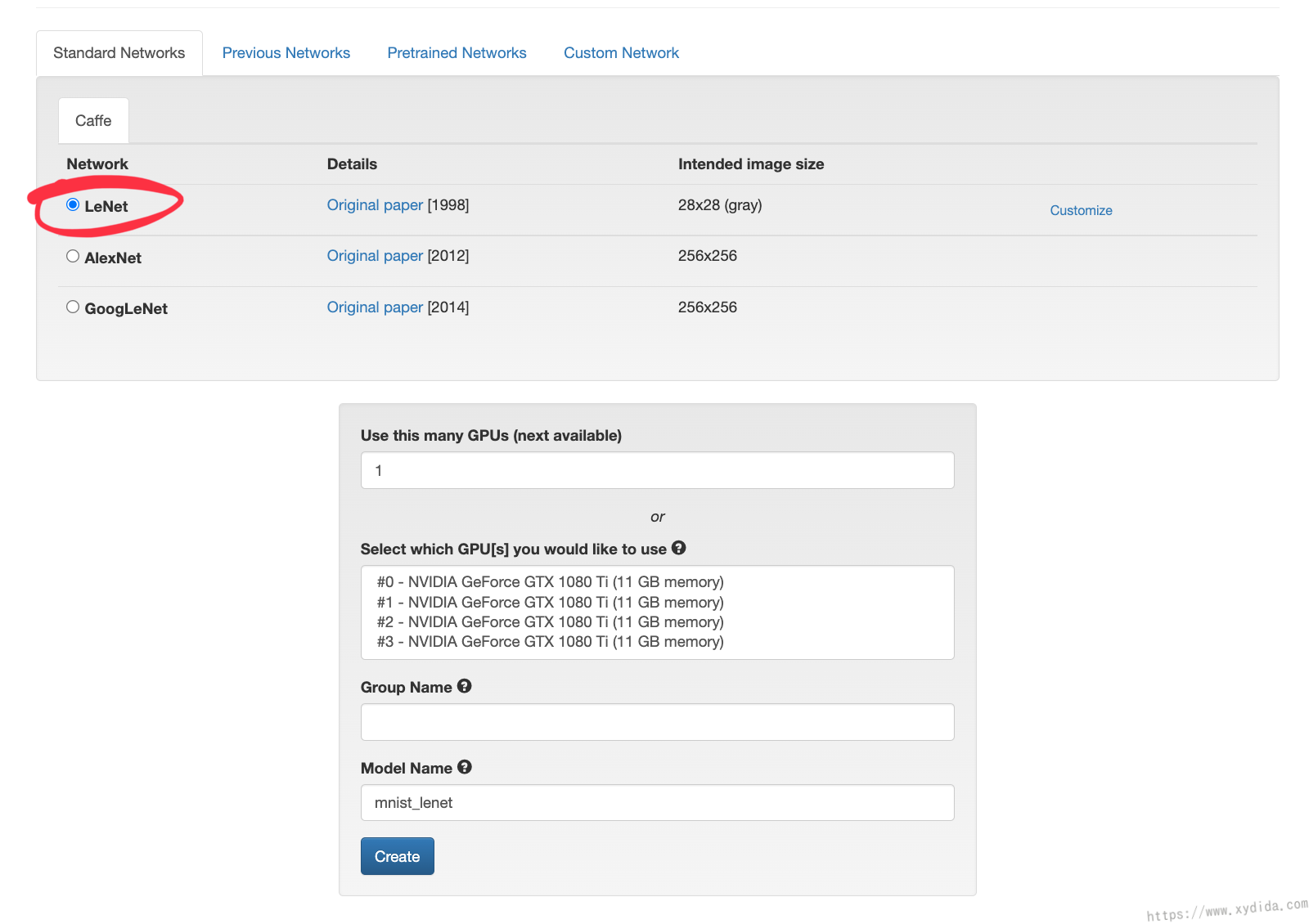

The most important step is choosing which network to use. In this example we train LeNet, which is a built-in network in DIGITS.
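To get a feel for how small LeNet is, the sketch below walks a 28x28 grayscale image through the layers and counts the trainable parameters. The layer sizes are an assumption: they follow the LeNet variant from Caffe's MNIST example (two 5x5 convolutions with 20 and 50 filters, each followed by 2x2 pooling, then fully connected layers of 500 and 10 units), and the preset in your DIGITS version may differ. `LENET_LAYERS`, `output_size` and `summarize` are names made up for this illustration.

```python
# Layer sizes follow Caffe's LeNet MNIST example (an assumption about the
# DIGITS preset): (name, kind, output channels or units, kernel, stride).
LENET_LAYERS = [
    ("conv1", "conv", 20, 5, 1),
    ("pool1", "pool", None, 2, 2),
    ("conv2", "conv", 50, 5, 1),
    ("pool2", "pool", None, 2, 2),
    ("ip1", "fc", 500, None, None),
    ("ip2", "fc", 10, None, None),
]


def output_size(size, kernel, stride):
    """Spatial size after an unpadded convolution or pooling window."""
    return (size - kernel) // stride + 1


def summarize(layers, size=28, channels=1):
    """Return (rows, total_params); each row is (name, shape, params)."""
    rows, total = [], 0
    features = None  # set once the feature maps are flattened to a vector
    for name, kind, out, kernel, stride in layers:
        if kind == "conv":
            params = out * channels * kernel * kernel + out  # weights + biases
            size, channels = output_size(size, kernel, stride), out
            shape = (channels, size, size)
        elif kind == "pool":
            params = 0  # pooling has no trainable parameters
            size = output_size(size, kernel, stride)
            shape = (channels, size, size)
        else:  # fully connected layer
            inputs = features if features is not None else channels * size * size
            params = inputs * out + out
            features = out
            shape = (out,)
        rows.append((name, shape, params))
        total += params
    return rows, total


if __name__ == "__main__":
    rows, total = summarize(LENET_LAYERS)
    for name, shape, params in rows:
        print(f"{name:6s} output={shape} params={params}")
    print("total parameters:", total)
```

Under these assumptions almost all of the parameters sit in the first fully connected layer, and the whole network has well under half a million of them, which helps explain why it trains so quickly on a single GPU.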

Training starts once the model has been created. In our experiment it took only three minutes, thanks to the high-performance GTX 1080 Ti.

Testing and analysing

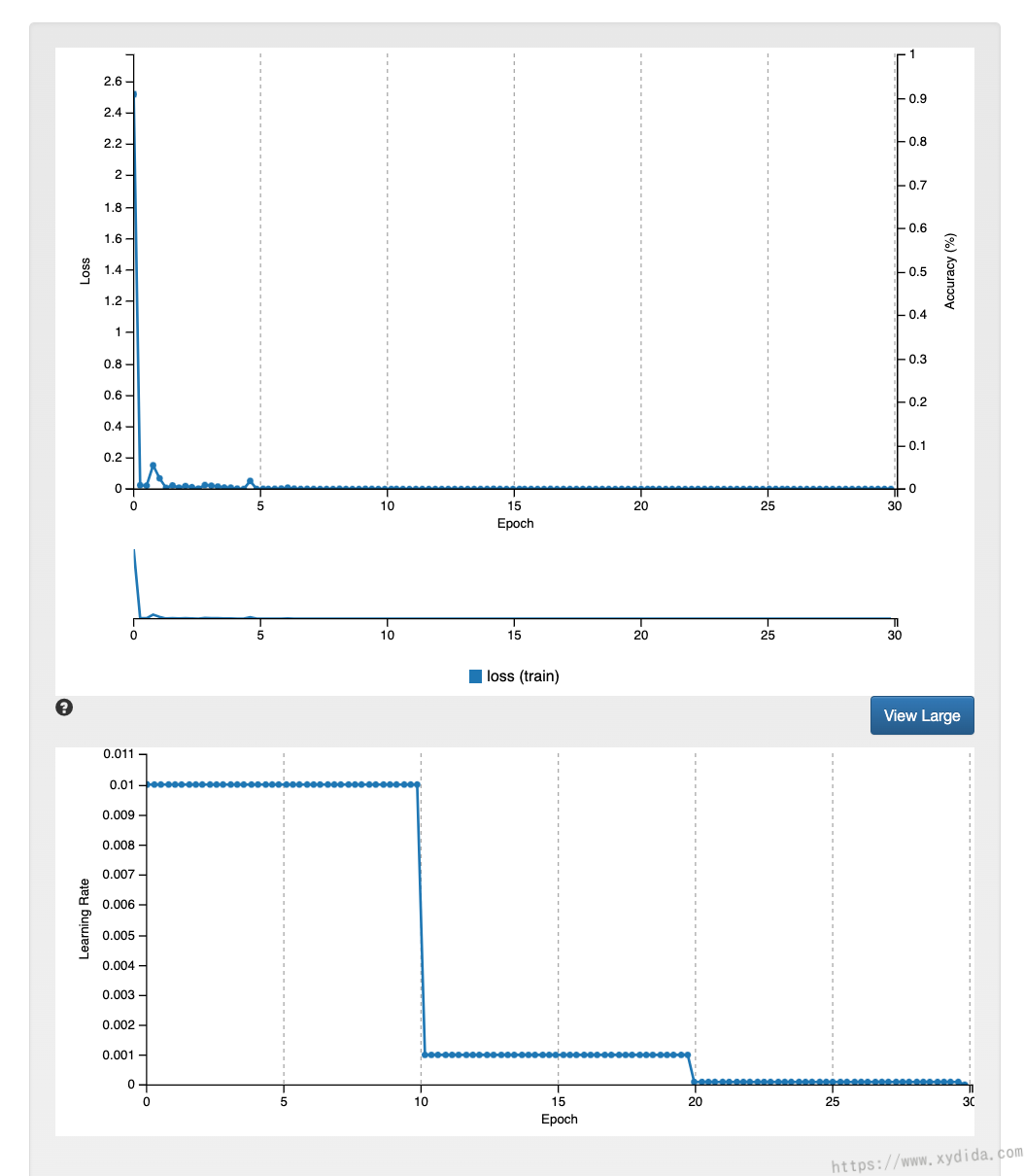

The first graph plots the training loss. The second plots the learning rate. LeNet here is trained with supervised learning: a classifier (here, a CNN) is shown many example images together with their correct labels, e.g. a picture of a 2 and the label 2. Through iterative training, the weights in the network are adjusted to minimise the value of the “loss function”. In our example, the training loss becomes very low after 4 epochs, so training for 40 epochs is clearly excessive.
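To make the “loss function” concrete, here is a small sketch of softmax cross-entropy, the loss commonly used for classifiers like this one. Whether the DIGITS preset uses exactly this loss is an assumption here, and the scores in the example are invented for illustration.

```python
import math


def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1."""
    highest = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(scores, label):
    """Loss for one image: negative log-probability of the correct class."""
    return -math.log(softmax(scores)[label])


if __name__ == "__main__":
    label = 2  # the picture shows a 2
    untrained = [0.0] * 10  # no preference among the ten digits
    trained = [0.0] * 10
    trained[label] = 8.0  # strong score for the correct digit
    print("untrained loss:", cross_entropy(untrained, label))
    print("trained loss:  ", cross_entropy(trained, label))
```

A network that has no preference among the ten digits has a loss of ln(10), roughly 2.3, which is about where a training loss curve for a ten-class problem typically starts. As the correct class gets a higher score, the loss falls towards zero.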

The learning rate is a critical factor in getting a CNN to train correctly. In this example, the base learning rate is 0.01. If it is too small, the CNN will take a very long time to train. If it is too big, the CNN may settle at a high loss or fail to converge at all.
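The effect of the learning rate can be seen on a toy problem. The sketch below is not the LeNet training itself: it runs plain gradient descent on the one-dimensional function f(w) = w², and the three rates are chosen only to show the three regimes for this toy function.

```python
def gradient_descent(learning_rate, steps=50, start=1.0):
    """Minimise f(w) = w**2 and return the loss after each step."""
    w = start
    losses = []
    for _ in range(steps):
        gradient = 2.0 * w  # derivative of w**2
        w -= learning_rate * gradient
        losses.append(w * w)
    return losses


if __name__ == "__main__":
    for rate in (0.001, 0.1, 1.1):
        final = gradient_descent(rate)[-1]
        print(f"learning rate {rate}: final loss {final:.3g}")
```

With the smallest rate the loss has barely moved after 50 steps, with the middle rate it is close to zero, and with the largest rate each step overshoots the minimum so the loss grows instead of shrinking. A real network has a far more complicated loss surface, so the useful range of rates has to be found by experiment.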